Blocking Exploitable Content

Tuesday 7th February 2017

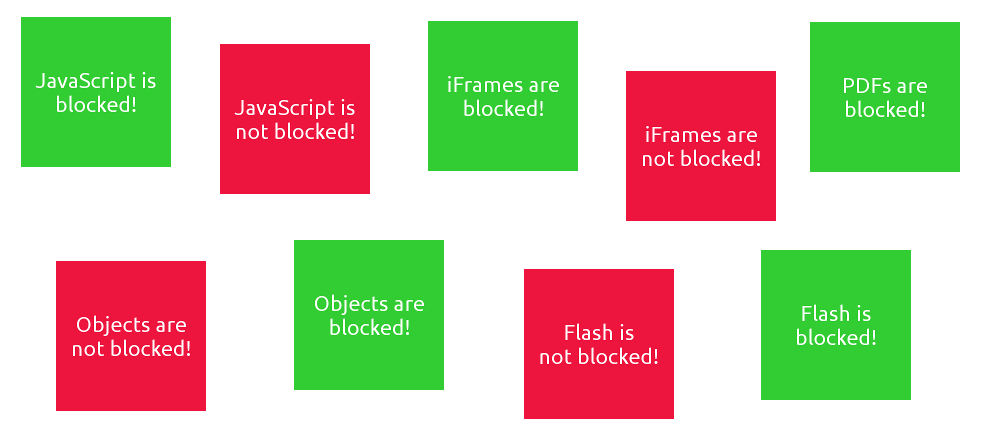

I recently added a new project page to my site: the Exploitable Web Content Blocking Test. It merges several ideas, and I created it as an easy way to test several aspects of your browser's security. It is not an advert/tracker blocking test; plenty of those already exist.

The blocking tests work by having two images placed directly on top of each other using CSS. The bottom image is a standard img tag, but the top image is placed using whatever type of content it is testing for. For example, the iFrame blocking test displays the top image with an iFrame, and the PDF blocking test displays it in a PDF file. The bottom image is green, and indicates that the content is successfully blocked. The top image is red and indicates that the content is not blocked.
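As a rough illustration of the stacking idea, here is a minimal sketch that builds the markup for an iFrame test. It is not the actual markup used on the test page: the function name `buildOverlayTest`, the file names and the inline styles are all assumptions made for the example.

```typescript
// Hypothetical helper: builds the markup for one blocking test.
// Both layers are absolutely positioned at the same spot inside a relative
// container. With equal stacking settings, the element that comes later in
// the markup is painted on top, so the iframe covers the img when it loads.
function buildOverlayTest(greenSrc: string, redFrameSrc: string, size: number): string {
  const box = `position: relative; width: ${size}px; height: ${size}px;`;
  const layer = `position: absolute; top: 0; left: 0; width: ${size}px; height: ${size}px; border: 0;`;
  return [
    `<div style="${box}">`,
    // Bottom layer: a plain img tag showing the green "blocked" image.
    `  <img src="${greenSrc}" alt="Blocked" style="${layer}">`,
    // Top layer: the content type under test, showing the red "not blocked" image.
    `  <iframe src="${redFrameSrc}" title="Not blocked" style="${layer}"></iframe>`,
    `</div>`,
  ].join("\n");
}

// Example usage with placeholder file names.
console.log(buildOverlayTest("green.png", "red-frame.html", 200));
```

If the browser or an extension stops the iframe from loading, the green image underneath should remain visible. Exactly what a blocker leaves behind (nothing, or an empty placeholder) varies, so treat this as a sketch of the principle only.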

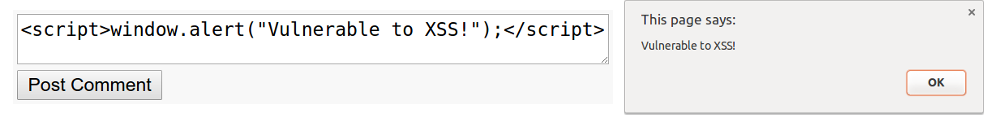

The use of JavaScript opens up a lot of potential attack vectors in your browser. These include cross-site scripting (XSS), cross-site request forgery (CSRF) and, more recently, self-inflicted cross-site scripting (self-XSS).

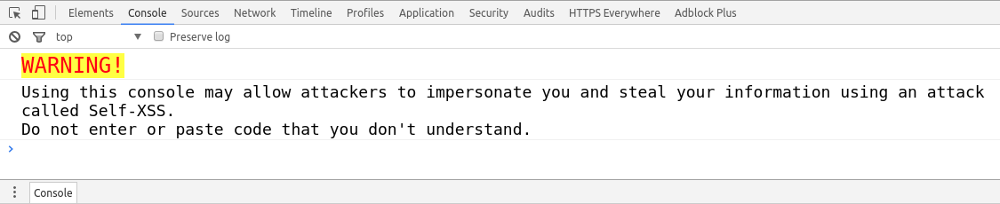

Self-XSS is where a user pastes malicious JavaScript into their web browser's developer console. It works in a similar way to phishing attacks, where the user is persuaded by an adversary to perform an action that is unknowingly harmful to themselves.

Generally, the user will come across code that claims to do something positive for them. For example: "Paste this into your console for free Amazon Prime membership!", or "Paste this into your console to see who has viewed your Facebook photos!". Obviously both of these are impossible and far too good to be true, but people still fall for it. The malicious code can then do pretty much anything in your browser, including downloading malware, changing your account passwords or sending your autofill information back to the attacker.

Many websites now display warnings in the developer console on pages containing sensitive content.
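A warning of this kind can be printed with styled console output. The sketch below uses the `%c` format specifier, which browser consoles accept for applying CSS to a message. The function name `printSelfXssWarning`, the wording and the styles are my own assumptions, not copied from any particular site.

```typescript
// Hypothetical helper: prints a large self-XSS warning to the console.
// The logger is injectable so the function can be exercised without a browser;
// by default it writes to console.log.
function printSelfXssWarning(log: (...args: string[]) => void = console.log): void {
  // "%c" applies the CSS in the following argument to the rest of the message.
  log("%cStop!", "color: red; font-size: 48px; font-weight: bold;");
  log(
    "%cIf someone told you to paste something here, it is probably a scam " +
      "and could give them access to your account.",
    "font-size: 16px;"
  );
}

// Example usage: call once when a sensitive page loads.
printSelfXssWarning();
```

A warning like this only helps if the user reads it before pasting, so it is a deterrent rather than a real defence.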

The main reason for having JavaScript blocked by default is to defend against these attack vectors. Even though the site itself may be trustworthy, a badly designed comment form could allow malicious JavaScript to be executed in your browser. JavaScript is also responsible for loading many adverts and trackers, and for setting cookies.
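To show what "badly designed" means on the site's side, here is a minimal sketch of the usual fix: escaping user-supplied text before placing it into HTML. The names `escapeHtml` and `renderComment` and the markup are hypothetical, and a real site would normally rely on its templating library's escaping rather than a hand-written helper.

```typescript
// Hypothetical helper: replaces the characters that are special in HTML,
// so user input is displayed as text instead of being parsed as markup.
function escapeHtml(text: string): string {
  return text
    .replace(/&/g, "&amp;") // must run first so later entities are not double-escaped
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;")
    .replace(/'/g, "&#39;");
}

// Hypothetical comment renderer: every user-controlled value is escaped.
function renderComment(author: string, body: string): string {
  return `<div class="comment"><strong>${escapeHtml(author)}</strong><p>${escapeHtml(body)}</p></div>`;
}

// Example usage: the script tag ends up as harmless visible text.
console.log(renderComment("mallory", "<script>alert(1)</script>"));
```

A comment form that skips this step inserts the attacker's script tag into the page as real markup, which is why a visitor cannot assume a trustworthy site is safe to run JavaScript on.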

Edit 7th Feb 2017 @ 5:59pm: What a coincidence! Just a few hours after I published this blog post, a serious XSS vulnerability was discovered on Steam profiles. The title section of the "Featured Guides" profile showcase allowed malicious JavaScript to be inserted and executed on any page that loads it. This was patched quickly by Valve, but many people have already been affected by it. Read more here and here.

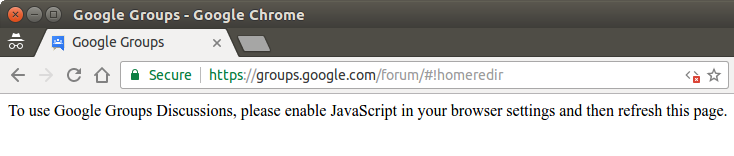

It's unfortunate that many websites do not even have basic functionality with JavaScript disabled. Of course I don't expect the fancy animated menus and interactive slide shows to work, but it's extremely frustrating when a site will not even display the main page content. Google Groups is a notable example of this.

Other sites handle the blocking of JavaScript in a much better way. For example, Stack Exchange sites have an unobtrusive message letting you know that their site works better with JavaScript enabled. You don't have to enable it to read the basic page content, but if you want to log in, vote, or post your own questions and answers, you'll need to enable it, which is fair enough.

iFrames suffer from the same issues. I don't even want to talk about sites that load their entire page content through an iFrame, but one mildly annoying thing is that Google reCAPTCHAs load through an iFrame. This means that you'll have to whitelist any site where you need to solve a reCAPTCHA, which includes many sign-up forms and comment sections.

I was also going to have a test for unencrypted HTTP requests. I decided against this since I could not find a way to do it without causing a mixed content SSL error on my site.

The original plan was to load the red image over HTTP by specifying that within the src attribute. Not surprisingly, this would give a mixed content SSL error (URL bar displays grey instead of green). Even if I were to go ahead with this, HTTPS Everywhere was not reliably blocking unencrypted connections when I set it to. I am not sure what caused this, but it made the blocking test unreliable and invalid. I will do more testing and see if I can find a way to reliably reproduce the problem.
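The condition that triggers the warning can be sketched as a small check: the page is served over HTTPS while the resource resolves to plain HTTP. The function name `isMixedContent` and the example URLs are assumptions, and real browsers apply extra rules (for instance, treating some local addresses as trustworthy) that this simplification ignores.

```typescript
// Hypothetical helper: reports whether loading `resourceSrc` from the page at
// `pageUrl` would be an HTTP request made from an HTTPS page.
function isMixedContent(pageUrl: string, resourceSrc: string): boolean {
  const page = new URL(pageUrl);
  // Resolve relative and protocol-relative sources against the page URL,
  // the same way a src attribute is resolved.
  const resource = new URL(resourceSrc, page);
  return page.protocol === "https:" && resource.protocol === "http:";
}

// Example usage with placeholder URLs.
console.log(isMixedContent("https://example.com/test/", "http://example.com/red.png"));
console.log(isMixedContent("https://example.com/test/", "red.png"));
```

Only a src that explicitly names `http://` trips the check on an HTTPS page, which matches the original plan of specifying HTTP within the src attribute.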

Another issue that I encountered was that the web objects blocking test conflicted with some of the others, mainly PDFs. PDFs are a form of web object, so this is not surprising. Blocking web objects also blocks PDFs, even if PDFs are not specifically blocked. I guess there is no harm in this, since as long as they are blocked somehow, everything is fine.